What is BirdNET?

How can computers learn to recognize birds from sounds? The K. Lisa Yang Center for Conservation Bioacoustics at the Cornell Lab of Ornithology and the Chair of Media Informatics at Chemnitz University of Technology are trying to find an answer to this question. Our research focuses mainly on the detection and classification of avian sounds using machine learning. We want to assist experts and citizen scientists in their work of monitoring and protecting our birds. BirdNET is a research platform that aims to recognize birds by sound at scale. We support a range of hardware and operating systems, including Arduino microcontrollers, the Raspberry Pi, smartphones, web browsers, workstation PCs, and even cloud services. BirdNET is both a citizen science platform and analysis software for extremely large collections of audio, and it aims to provide innovative tools for conservationists, biologists, and birders alike.

This page features some of our public demonstrations, including a live stream demo, a demo for the analysis of audio recordings, apps for Android and iOS, and a visualization of app submissions. All demos are based on an artificial neural network we call BirdNET. We are constantly improving the features and performance of our demos, so please check back with us regularly.

BirdNET can currently identify around 3,000 of the world’s most common species. We will add more species in the near future.

Want to use BirdNET to analyze a large data collection? Go to our GitHub repository to download BirdNET.

Have any questions? Please let us know (we speak English and German): ccb-birdnet@cornell.edu

Learn more about BirdNET:

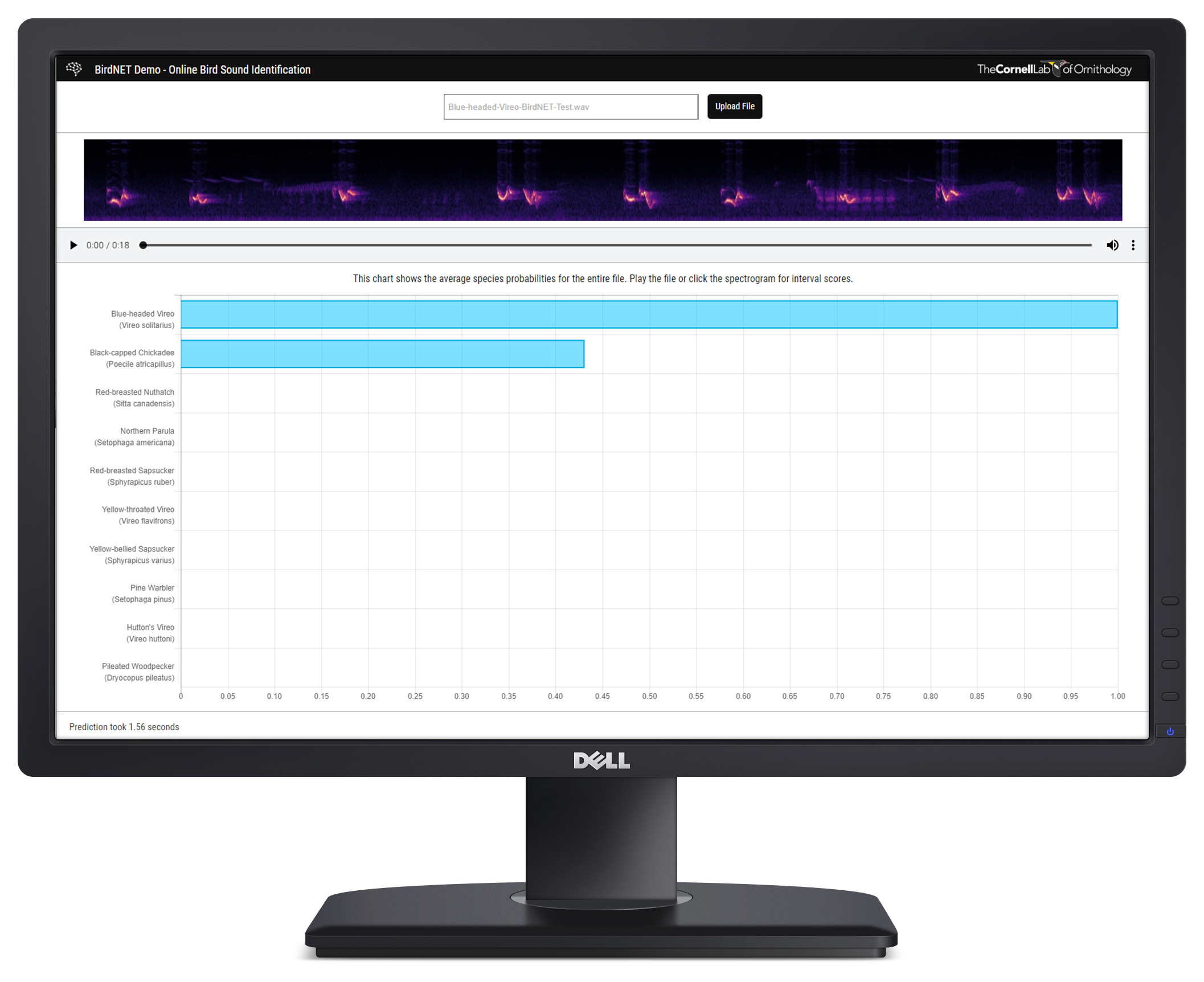

Analysis of Audio Recordings

Reliable identification of bird species in recorded audio files would be a transformative tool for researchers, conservation biologists, and birders. This demo provides a web interface for the upload and analysis of audio recordings. Based on an artificial neural network featuring almost 1,000 of the most common species of North America and Europe, this demo shows the most probable species for every second of the recording. Please note: We need to transfer the audio recordings to our servers in order to process the files. This demo is intended for large screens.
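To illustrate what "the most probable species for every second" means, here is a minimal sketch in Python. It is not BirdNET's actual code: the function name `most_probable_per_second`, the input layout (one list of per-second confidence scores per species), and the example scores are all assumptions made for illustration.

```python
from typing import Dict, List, Tuple


def most_probable_per_second(
    scores: Dict[str, List[float]],
) -> List[Tuple[int, str, float]]:
    """Return (second, species, score) for the top-scoring species of each second.

    `scores` maps a species name to its confidence score for each second.
    This layout is a hypothetical one chosen for the example.
    """
    if not scores:
        return []
    # Only consider seconds for which every species has a score.
    n_seconds = min(len(values) for values in scores.values())
    result = []
    for second in range(n_seconds):
        # Pick the species with the highest score in this second.
        species, score = max(
            ((name, values[second]) for name, values in scores.items()),
            key=lambda pair: pair[1],
        )
        result.append((second, species, score))
    return result


# Made-up scores for a three-second recording (illustrative only).
example_scores = {
    "American Robin": [0.91, 0.40, 0.05],
    "Northern Cardinal": [0.03, 0.55, 0.10],
    "Blue Jay": [0.02, 0.01, 0.80],
}

for second, species, score in most_probable_per_second(example_scores):
    print(f"second {second}: {species} ({score:.2f})")
```

A real analysis would probably also apply a minimum confidence threshold so that seconds without any clear bird sound are not labeled.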

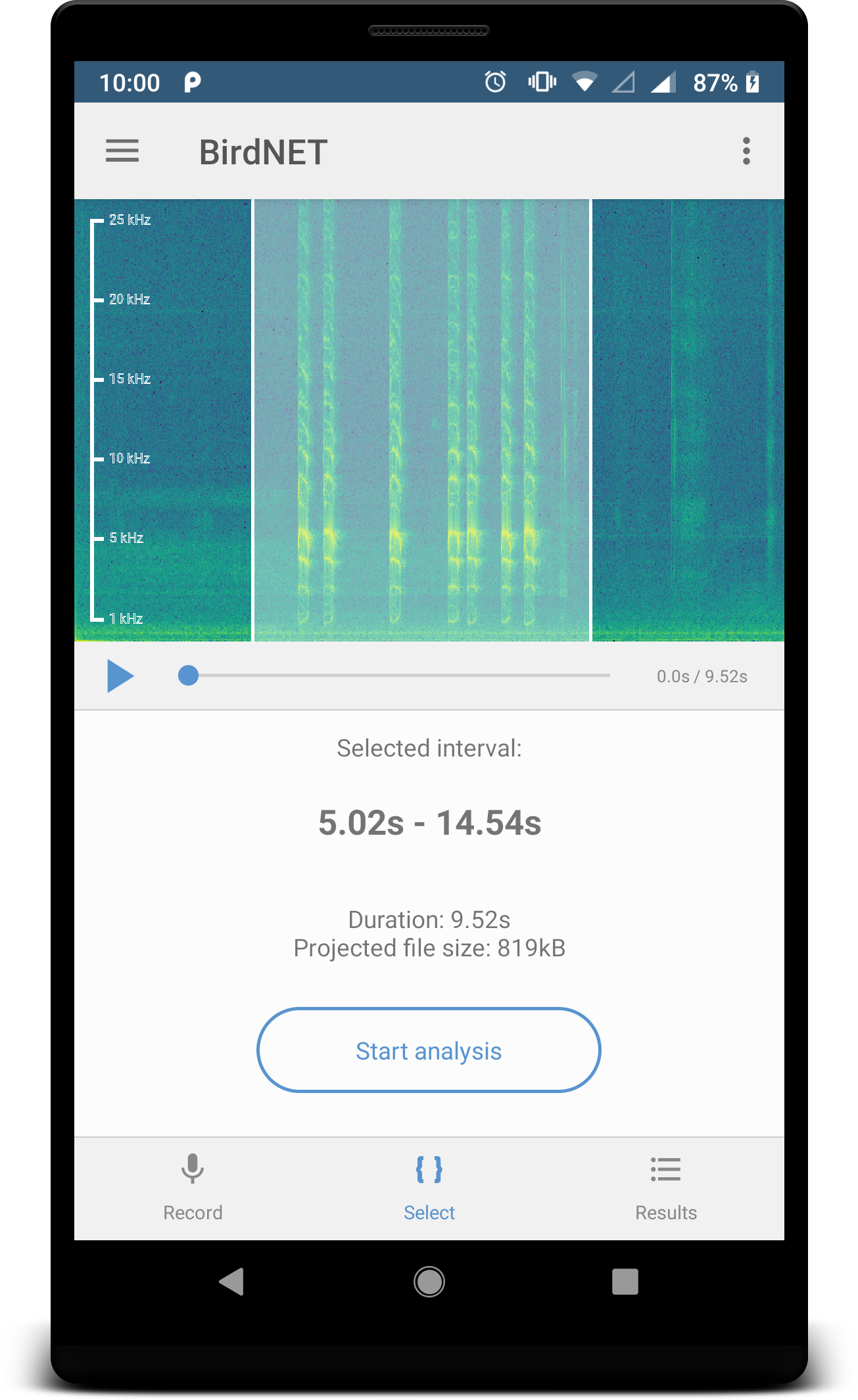

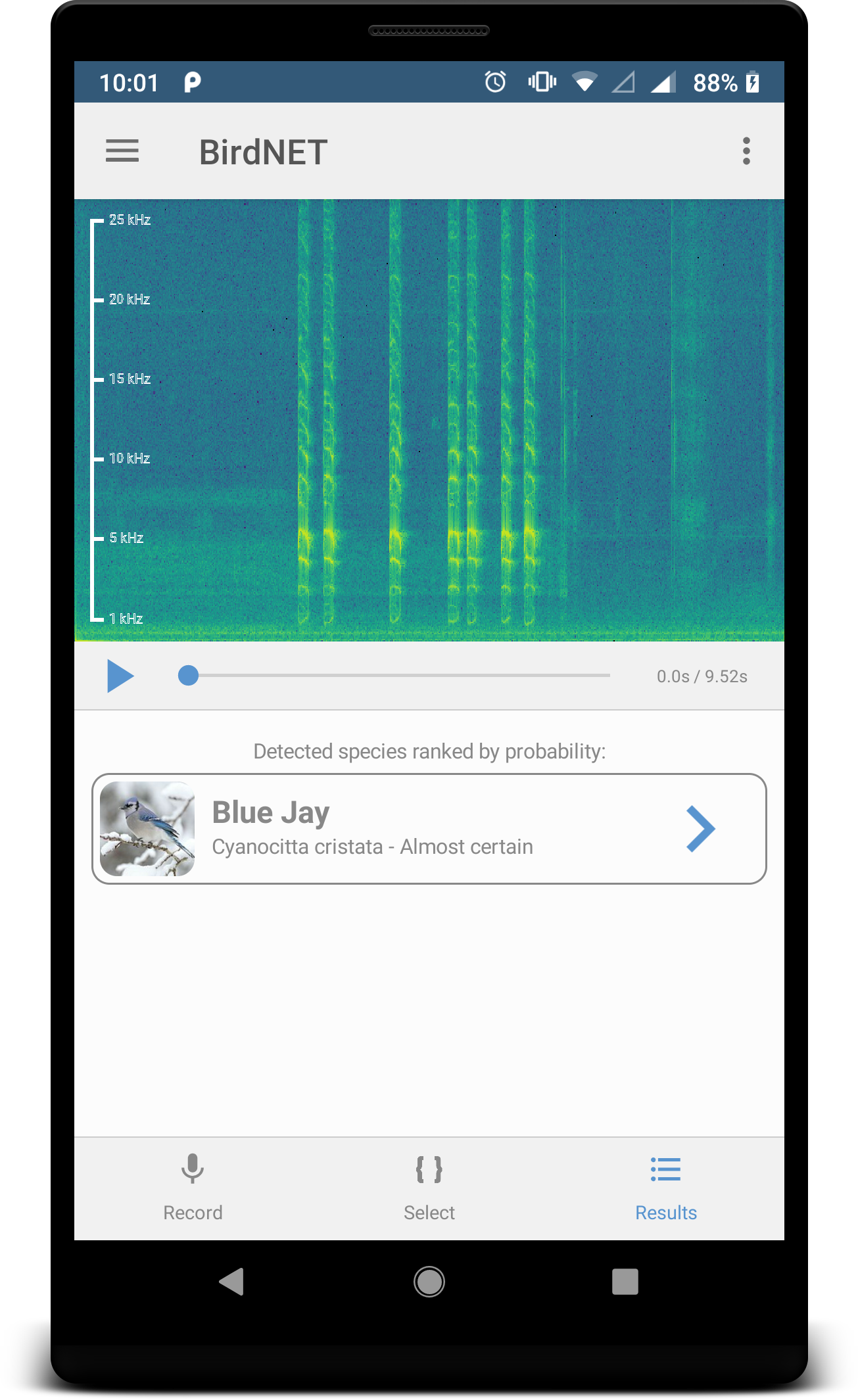

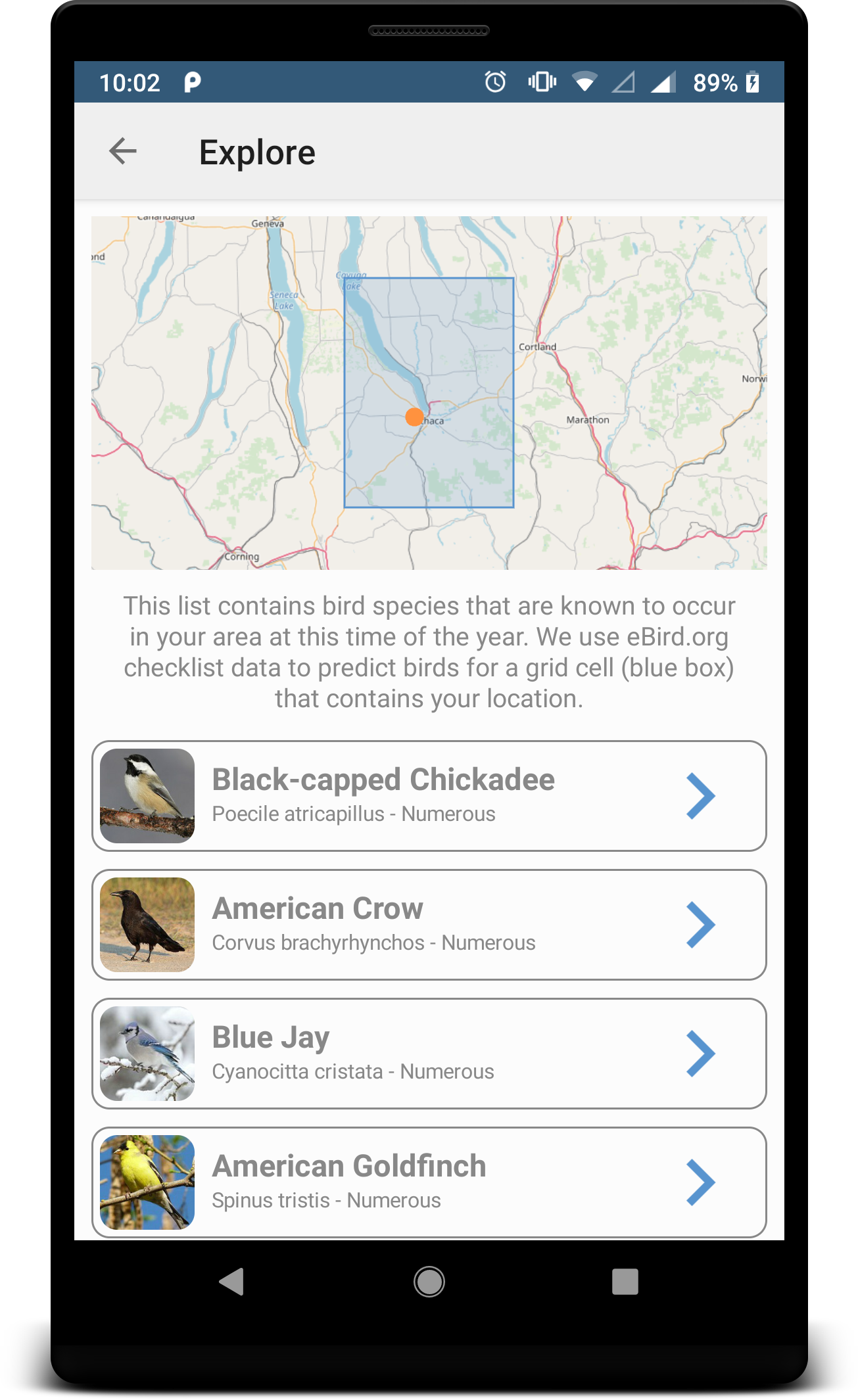

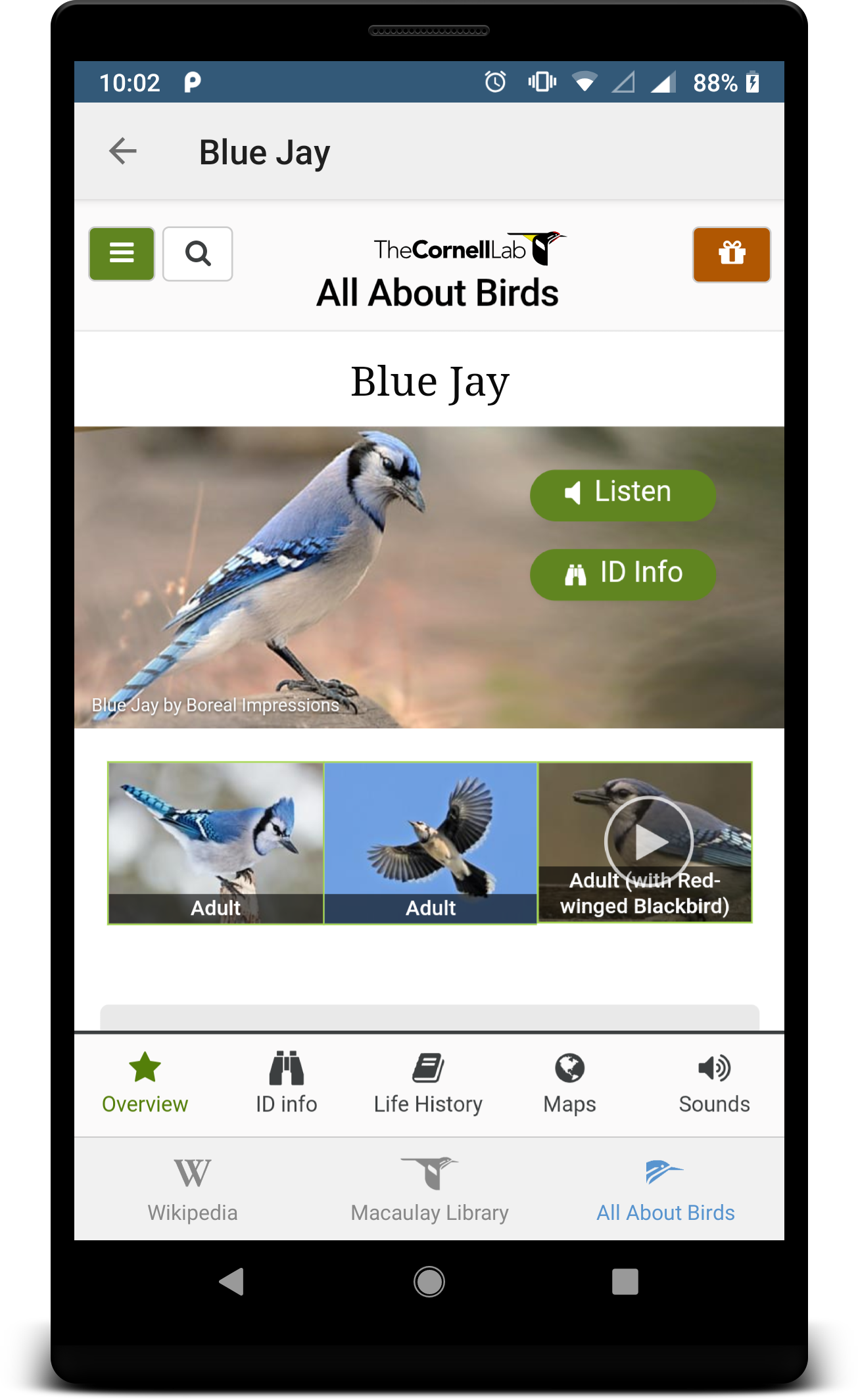

Smartphone App

This app lets you record a file using the internal microphone of your Android or iOS device. An artificial neural network then tells you the most probable bird species present in your recording. We use the native sound recording feature of smartphones and tablets as well as the GPS service to make predictions based on location and date. Give it a try! Please note: We need to transfer the audio recordings to our servers in order to process the files. Recording quality may vary depending on your device. External microphones will probably increase the recording quality.

Follow this link to download the app for Android.

Follow this link to download the app for iOS.

Note: If you encounter any instabilities or have any questions regarding the functionality, please let us know. We will add new features in the near future, and you will receive all updates automatically.

About us:

Cornell Lab of Ornithology

Dedicated to advancing the understanding and protection of the natural world, the Cornell Lab joins with people from all walks of life to make new scientific discoveries, share insights, and galvanize conservation action. Our Johnson Center for Birds and Biodiversity in Ithaca, New York, is a global center for the study and protection of birds and biodiversity, and the hub for millions of citizen-science observations pouring in from around the world.

K. Lisa Yang Center for Conservation Bioacoustics

Based at the Cornell Lab of Ornithology, the K. Lisa Yang Center for Conservation Bioacoustics collects and interprets sounds in nature by developing and applying innovative conservation technologies across multiple ecological scales to inspire and inform conservation of wildlife and habitats. Our highly interdisciplinary team works with collaborators on terrestrial, aquatic, and marine bioacoustic research projects tackling conservation issues worldwide.

Chemnitz University of Technology

Chemnitz University of Technology is a public university in Chemnitz, Germany. With over 11,000 students, it is the third largest university in Saxony. It was founded in 1836 as Königliche Gewerbeschule (Royal Mercantile College) and was elevated to a Technische Hochschule, a university of technology, in 1963. With approximately 1,500 employees in science, engineering and management, TU Chemnitz counts among the most important employers in the region.

Chair of Media Informatics

The Chair of Media Informatics at Chemnitz University of Technology has been working on content-based analysis of large, heterogeneous data sets since 2007. In addition, the Chair of Media Informatics researches and teaches in the area of human-computer interaction, with a special focus on critical and inclusive interaction design, as well as novel (mobile) interaction modalities.

Meet the team:

Stefan Kahl

I am a postdoc within the K. Lisa Yang Center for Conservation Bioacoustics at the Cornell Lab of Ornithology and the Chemnitz University of Technology. My work includes the development of AI applications using convolutional neural networks for bioacoustics, environmental monitoring, and the design of mobile human-computer interaction. I am the main developer of BirdNET and our demonstrators.

Ashakur Rahaman

I am a research analyst within the K. Lisa Yang Center for Conservation Bioacoustics at the Cornell Lab of Ornithology and the community manager of the BirdNET app. I am actively involved in environmental conservation through scientific inquiries and public engagement. Understanding the relationship between natural sounds and the effects of anthropogenic factors on the communication space of animals is my passion.

Connor Wood

My primary interest as a postdoc within the K. Lisa Yang Center for Conservation Bioacoustics at the Cornell Lab of Ornithology is understanding how wildlife populations and ecological communities respond to environmental change, and thus contributing to their conservation. I use audio data collected during large-scale monitoring projects to study North American bird communities.

Kristin Brunk

As a postdoc within the K. Lisa Yang Center for Conservation Bioacoustics, I am using bioacoustics data about bird communities in California’s Sierra Nevada to model the occupancy of several focal bird species in response to habitat and fire conditions. These models and data will be used by managers to inform conservation decisions into the future, especially in the face of an uncertain climate.

Amir Dadkhah

I am a software developer and computer scientist at the Chair of Media Informatics at Chemnitz University of Technology with a focus on applied computer science and human-centered design. I am the lead developer of the iOS version of the BirdNET app.

Holger Klinck

I am the John W. Fitzpatrick Director of the K. Lisa Yang Center for Conservation Bioacoustics at the Cornell Lab of Ornithology, Faculty Fellow with the Atkinson Center for a Sustainable Future at Cornell University, and adjunct assistant professor at Oregon State University. My current research focuses on the development and application of hardware and software tools for passive-acoustic monitoring of terrestrial and marine ecosystems and biodiversity.

Support us:

Donate

BirdNET is a research project that relies on external funding. We want to develop new features, add more species, expand our services, and most importantly, provide a great experience for birders and those who want to become one.

With your donation you can help us to achieve these goals.

Every amount is valuable! It helps us cover server costs and keep our research going.

Collaborate

Are you currently researching a topic where BirdNET could be helpful, or do you have an idea for a research project? Let us know! Would you like to support us with software and app development?

Please contact us.

We are open to your ideas and would love to talk with you.

Send us an email: ccb-birdnet@cornell.edu

Related publications:

Wood, C. M., Kahl, S., Chaon, P., Peery, M. Z., & Klinck, H. (2021). Survey coverage, recording duration and community composition affect observed species richness in passive acoustic surveys. Methods in Ecology and Evolution. [PDF]

Kahl, S., Wood, C. M., Eibl, M., & Klinck, H. (2021). BirdNET: A deep learning solution for avian diversity monitoring. Ecological Informatics, 61, 101236. [Source]

Kahl, S., Denton, T., Klinck, H., Glotin, H., Goëau, H., Vellinga, W. P., … & Joly, A. (2021). Overview of BirdCLEF 2021: Bird call identification in soundscape recordings. In CLEF 2021 (Working Notes). [PDF]

Joly, A., Goëau, H., Kahl, S., Picek, L., Lorieul, T., Cole, E., … & Müller, H. (2021). Overview of LifeCLEF 2021: An evaluation of machine-learning based species identification and species distribution prediction. In International Conference of the Cross-Language Evaluation Forum for European Languages (pp. 371-393). Springer, Cham. [PDF]

Kahl, S., Clapp, M., Hopping, W., Goëau, H., Glotin, H., Planqué, R., … & Joly, A. (2020). Overview of BirdCLEF 2020: Bird Sound Recognition in Complex Acoustic Environments. In CLEF 2020 (Working Notes). [PDF]

Joly, A., Goëau, H., Kahl, S., Deneu, B., Servajean, M., Cole, E., … & Lorieul, T. (2020). Overview of LifeCLEF 2020: A System-Oriented Evaluation of Automated Species Identification and Species Distribution Prediction. In International Conference of the Cross-Language Evaluation Forum for European Languages (pp. 342-363). Springer, Cham. [PDF]

Kahl, S. (2020). Identifying Birds by Sound: Large-scale Acoustic Event Recognition for Avian Activity Monitoring. Dissertation. Chemnitz University of Technology, Chemnitz, Germany. [PDF]

Kahl, S., Stöter, F. R., Goëau, H., Glotin, H., Planqué, R., Vellinga, W. P., & Joly, A. (2019). Overview of BirdCLEF 2019: Large-scale Bird Recognition in Soundscapes. In CLEF 2019 (Working Notes). [PDF]

Joly, A., Goëau, H., Botella, C., Kahl, S., Servajean, M., Glotin, H., … & Müller, H. (2019). Overview of LifeCLEF 2019: Identification of Amazonian plants, South & North American birds, and niche prediction. In International Conference of the Cross-Language Evaluation Forum for European Languages (pp. 387-401). Springer, Cham. [PDF]

Joly, A., Goëau, H., Botella, C., Kahl, S., Poupard, M., Servajean, M., … & Schlüter, J. (2019). LifeCLEF 2019: Biodiversity Identification and Prediction Challenges. In European Conference on Information Retrieval (pp. 275-282). Springer, Cham. [PDF]

Kahl, S., Wilhelm-Stein, T., Klinck, H., Kowerko, D., & Eibl, M. (2018). Recognizing Birds from Sound – The 2018 BirdCLEF Baseline System. arXiv preprint arXiv:1804.07177. [PDF]

Goëau, H., Kahl, S., Glotin, H., Planqué, R., Vellinga, W. P., & Joly, A. (2018). Overview of BirdCLEF 2018: monospecies vs. soundscape bird identification. In CLEF 2018 (Working Notes). [PDF]

Kahl, S., Wilhelm-Stein, T., Klinck, H., Kowerko, D., & Eibl, M. (2018). A Baseline for Large-Scale Bird Species Identification in Field Recordings. In CLEF 2018 (Working Notes). [PDF]

Kahl, S., Wilhelm-Stein, T., Hussein, H., Klinck, H., Kowerko, D., Ritter, M., & Eibl, M. (2017). Large-Scale Bird Sound Classification using Convolutional Neural Networks. In CLEF 2017 (Working Notes). [PDF]